Document 1

Released under the Official Information Act 1982

_____________

_____________

1982

Act

Information

Official

the

under

Released

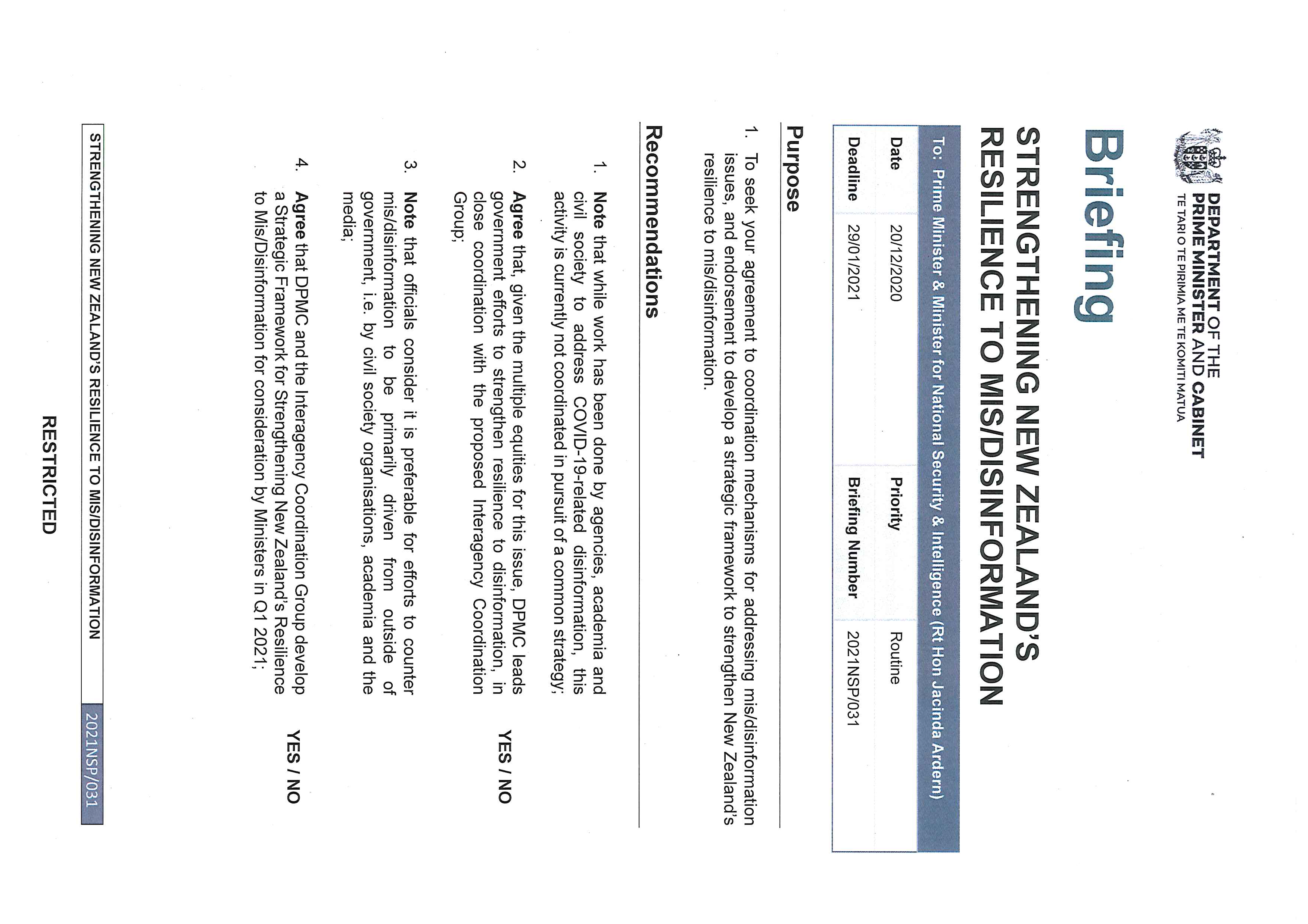

_____________

1982

Act

Information

Official

the

under

Released

____________

1982

Act

Information

Official

the

under

Released

____________

_____________

1982

Act

Information

Official

the

under

Released

_____________

____________

1982

Act

Information

Official

the

under

Released

_____________

_____________

1982

Act

Information

Official

the

under

Released

_____________

1982

Act

Information

Official

the

under

Released

1982

Act

Information

Official

the

under

Released

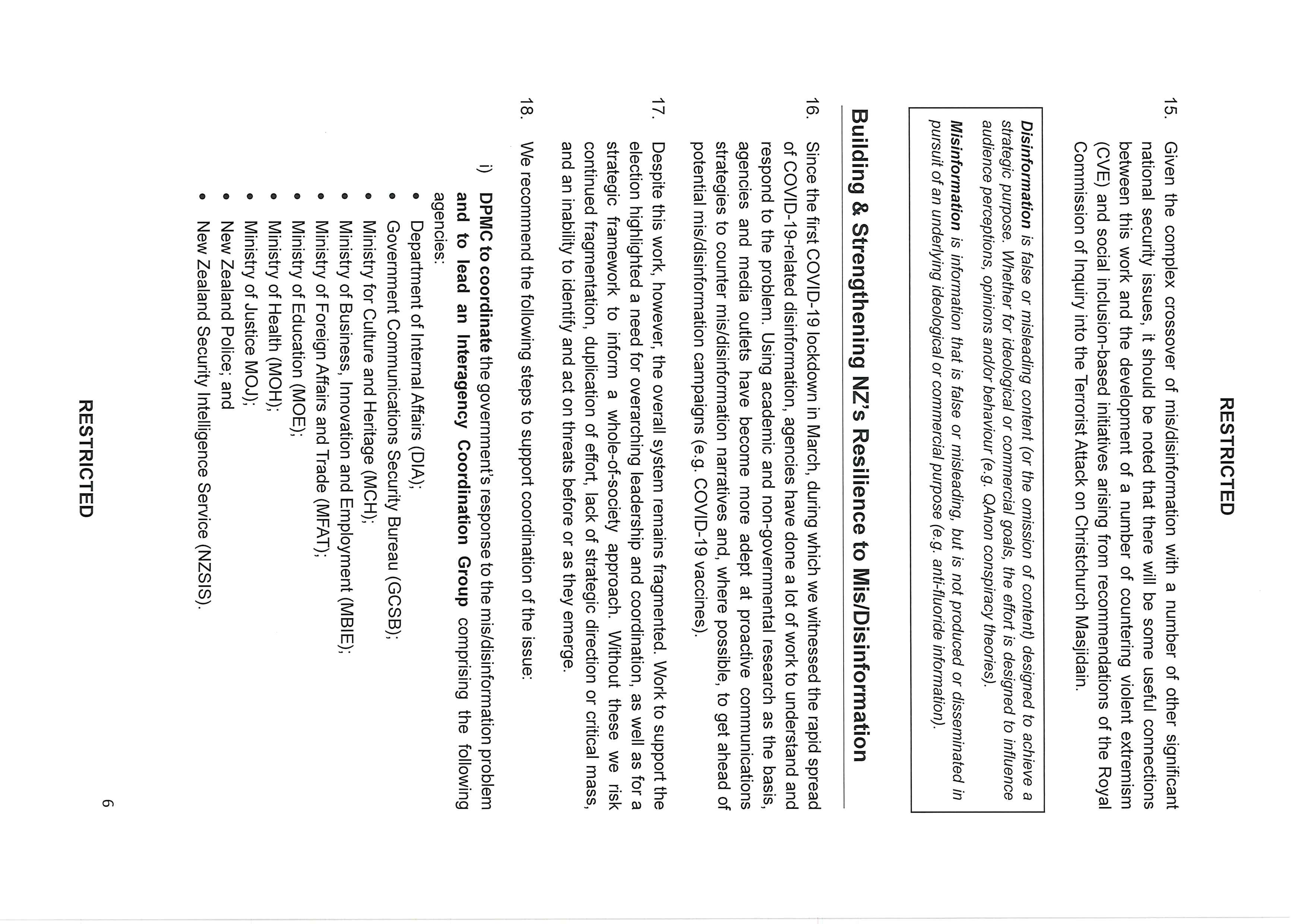

RESTRICTED

• Google released a new COVID-19 information policy for YouTube;

• Twitter has implemented new policies for flagging disinformation content – including COVID-19 and elections-related disinformation – and makes the disinformation and accounts it has removed available for research; and

• Microsoft has launched new technologies targeting disinformation – NewsGuard and Video Authenticator – as part of its Defending Democracy programme.

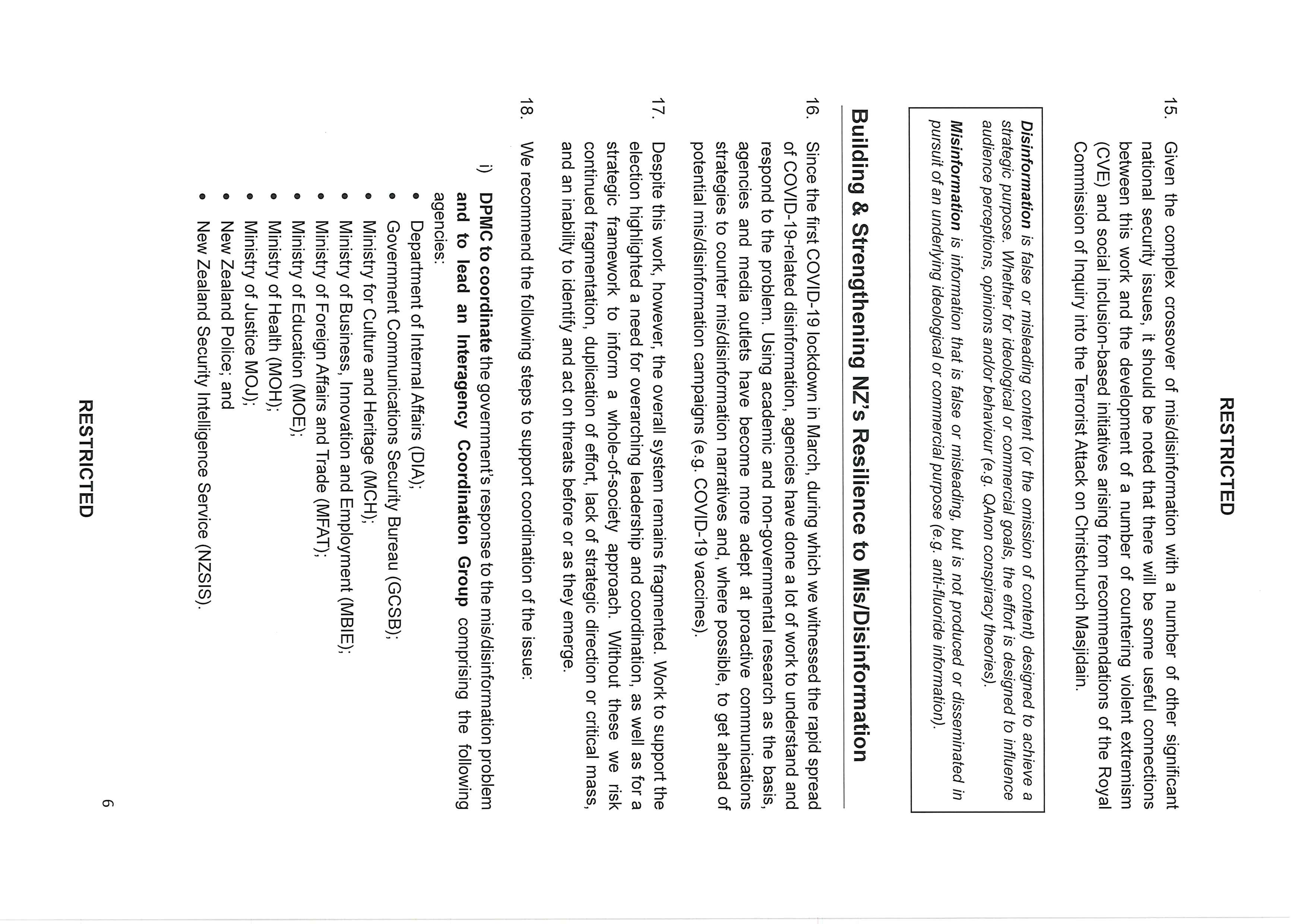

…and mis/disinformation more general y

3. Meanwhile, mis/disinformation more generally has also attracted significant public attention in New Zealand over the past few months, with media organisations such as Stuff, Newsroom NZ and The Spinoff, and independent organisations such as Netsafe3 and InternetNZ, drawing attention to the problem through public campaigns. These independent narratives are especially welcome and helpful, as they are less likely than government messaging to be associated with conspiracy theories about state control.

4. Major platforms have also engaged with New Zealand on disinformation issues through Ministers and senior officials. This has included engagement as part of Christchurch Call implementation, in relation to the October General Election, and on material relating to the COVID-19 pandemic. s9(2)(ba)(i)

5. Similarly, a range of civil society organisations and social enterprises specialising in disinformation issues has reached out to New Zealand officials to offer engagement and support on disinformation and related issues. s6(b)(ii)

Meanwhile, the Classification Office is currently developing an in-depth survey to build a better understanding of public attitudes towards and perceptions of disinformation.

6. In respect of central government activity, DPMC, the Ministry of Justice (MOJ) and the intelligence agencies put processes in place for addressing elections-related disinformation. Looking ahead, DIA, with the Ministry for Culture and Heritage (MCH), is scoping a potential review of media content regulation, which may provide an opportunity to address policy issues relating to mis/disinformation. However, DIA and MCH are still at the early stages of this work, and the commencement and scope of any review would depend on Ministerial responsibilities and priorities. Some aspects of mis/disinformation may need a more immediate response than the media content review could deliver.

3 In August, as part of its awareness campaign to help people understand and recognise mis/disinformation, Netsafe released the results of a nationally representative survey of New Zealanders’ perceptions of fake news.

https://www.netsafe.org.nz/yournewsbulletin/

7. In related work, as noted in the Government’s response to the report of the Royal Commission of Inquiry, MOJ is progressing work around possible new hate speech/hate crime legislation, and work is underway to establish a National Centre of Excellence to focus on diversity, social cohesion, and preventing and countering violent extremism.

We need coordination in order to strengthen New Zealand’s resilience to mis/disinformation

8. Despite the work that has been undertaken and is ongoing, the system remains fragmented. It lacks overarching leadership and coordination, an enduring monitoring capability (especially for non-COVID-19 related issues), a policy and referrals framework, and guiding principles.

9. Without these, we risk continued fragmentation, duplication of effort, a lack of strategic direction or critical mass, and an inability to identify and act on threats before or as they emerge. In the absence of change, agencies would continue to undertake a range of ad hoc activities that do not contribute to the strategic goal of building New Zealand’s resilience to mis/disinformation.

We recommend the following steps to ensure coordination of the issue:

Establish a workstream lead and an Interagency Coordination Group

10. While responsibility for managing different aspects of mis/disinformation will inevitably remain dispersed, strategic oversight by lead agencies is necessary to ensure relevant stakeholders are connected and coordinated, and that their actions align with the wider policy direction and, if necessary, response.

11. While the issue of mis/disinformation crosses multiple portfolios, some agencies will be better placed to take the lead on it, in particular:

• DPMC, as the central agency for coordinating the national security system and host agency for the Strategic Coordinators that address cross-government work programmes on significant, related national security issues (Cyber Coordinator, Foreign Interference and Counter-Terrorism). DPMC is also home to the Prime Minister’s Special Representative on Cyber and Digital, who acts as a senior-level interface with the technology and digital sector on security and public safety; and

• DIA, as the lead agency for Digital Safety and Countering Violent Extremism Online.4 DIA administers the Films, Videos, and Publications Classification Act 1993 (and is therefore responsible for censorship policy), hosts the Digital Safety Group and the Government Chief Digital Officer and, with the Ministry for Culture and Heritage (MCH), is at the early stages of scoping a potential review of the media content regulatory system.

4 DIA and DPMC also jointly lead the government’s Preventing and Countering Violent Extremism (PCVE) work programme, which will have significant overlaps with mis/disinformation.

12. There are potential complications with any one government agency taking the lead. For example, an agency such as DIA, which is involved in censorship and compliance, being seen to lead the government’s response to mis/disinformation may reinforce conspiracy theories about state control of media. However, this kind of narrative is likely to surface within conspiracy theory circles regardless of which agency is involved.

13. There are also potential resource constraints for agencies picking up new workstreams. s9(2)(g)(i)

However, as this work needs to proceed as a matter of priority, we recommend that DPMC lead this workstream, at least in the short term, working collaboratively with a group of relevant agencies to coordinate the whole-of-system response.

14. DIA recently convened a meeting of relevant agencies to consider this paper, and we recommend that these participating agencies form the basis of an Interagency Coordination Group: DIA, DPMC, GCSB, MBIE, MFAT, Ministry for Culture and Heritage, Ministry of Education, Ministry of Health, Ministry of Justice, and NZ Police. The intention is that this group will begin to monitor (within existing resources) current mis/disinformation risks, start to build connections with non-governmental partners and, where required, inform and coordinate public communications responses.

Identify a Group of Ministers

15. Sitting above the agencies, we also recommend a group of relevant Ministers to whom issues can be flagged, rather than a single Minister responsible for disinformation issues. Given the nature of the challenge, it is more appropriate to spread responsibility across several portfolios.

16. This Ministerial group would not meet on a formal or regular basis. Instead, the Interagency Coordination Group will escalate or flag issues to the group of Ministers as necessary, seeking decisions on more sensitive policy and communications issues and ensuring the Government is well informed on mis/disinformation trends.

17. We recommend that the Ministerial group comprise:

• Minister for National Security & Intelligence, Rt Hon Jacinda Ardern

Mis/disinformation gives rise to and impacts several national security risks, for which the Minister for National Security & Intelligence has responsibility. The response to mis/disinformation will also have implications for other workstreams in the national security portfolio, including countering violent extremism and foreign interference.

• Minister of Education / Minister for COVID-19 Response, Hon Chris Hipkins

A key avenue for strengthening resilience to disinformation is the development of effective education programmes that continue to build science literacy and numeracy, and that add new areas of focus around critical thinking and media literacy. Disinformation is also one of the most significant risks to our COVID-19 response and the effective roll-out of a vaccine.

• Minister of Health / Minister Responsible for the GCSB & NZSIS, Hon Andrew Little

The Ministry of Health is the lead agency for the COVID-19 vaccine, which is likely to present our greatest disinformation challenge over the coming year. Many disinformation narratives may be generated and/or spread by state actors, which means the intelligence agencies will play a key role.

• Minister for Broadcasting and Media / Minister of Justice, Hon Kris Faafoi

The Minister for Broadcasting and Media, along with the Minister of Internal Affairs, is scoping a review of content regulation which may overlap with this work on disinformation. While yet to be finalised, a review of the way content is regulated in New Zealand would seek to address gaps and inconsistencies in the current content regulation framework. The Minister for Broadcasting and Media is also responsible for work programmes that aim to build a strong and independent media sector, which may help provide accurate sources of information to counter mis/disinformation. These include the Strong Public Media Programme, which is assessing the viability of establishing a new public media entity, and the Investing in Sustainable Journalism initiative, which aims to protect public interest journalism.

• Minister of Internal Affairs, Hon Jan Tinetti

In addition to scoping the potential review of media content regulation with the Minister for Broadcasting and Media, the Minister of Internal Affairs is responsible for Digital Safety (including CVE online), the Films, Videos, and Publications Classification Act 1993, and setting and monitoring the strategic direction of the independent Crown Entity the Office of Film and Literature Classification.

•

Minister for the Digital Economy and Communications, Hon Dr David Clark

The Minister for the Digital Economy and Communications is responsible for the digital

safety work programme, which includes efforts to counter a range of online harms and

under

promote online safety.

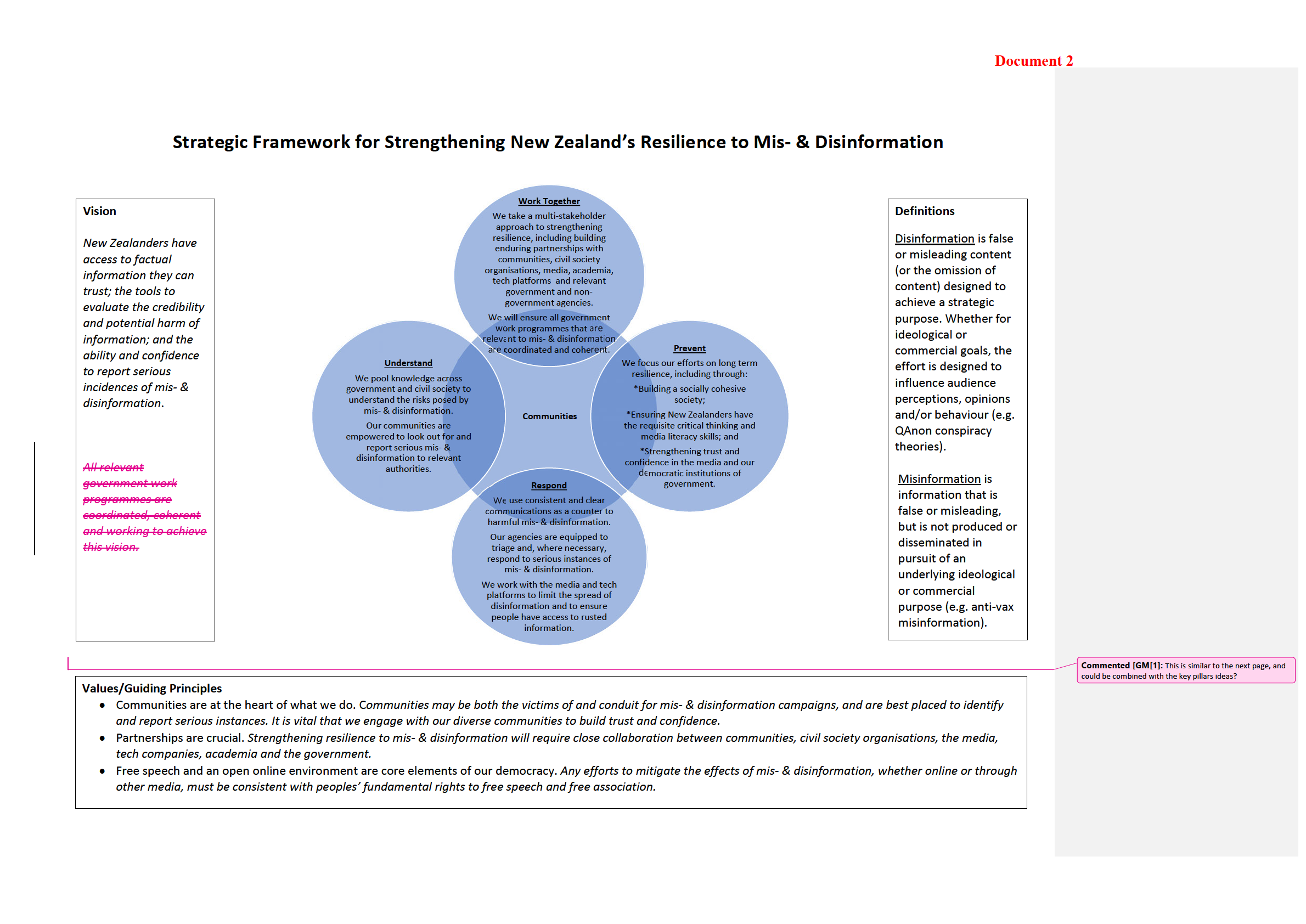

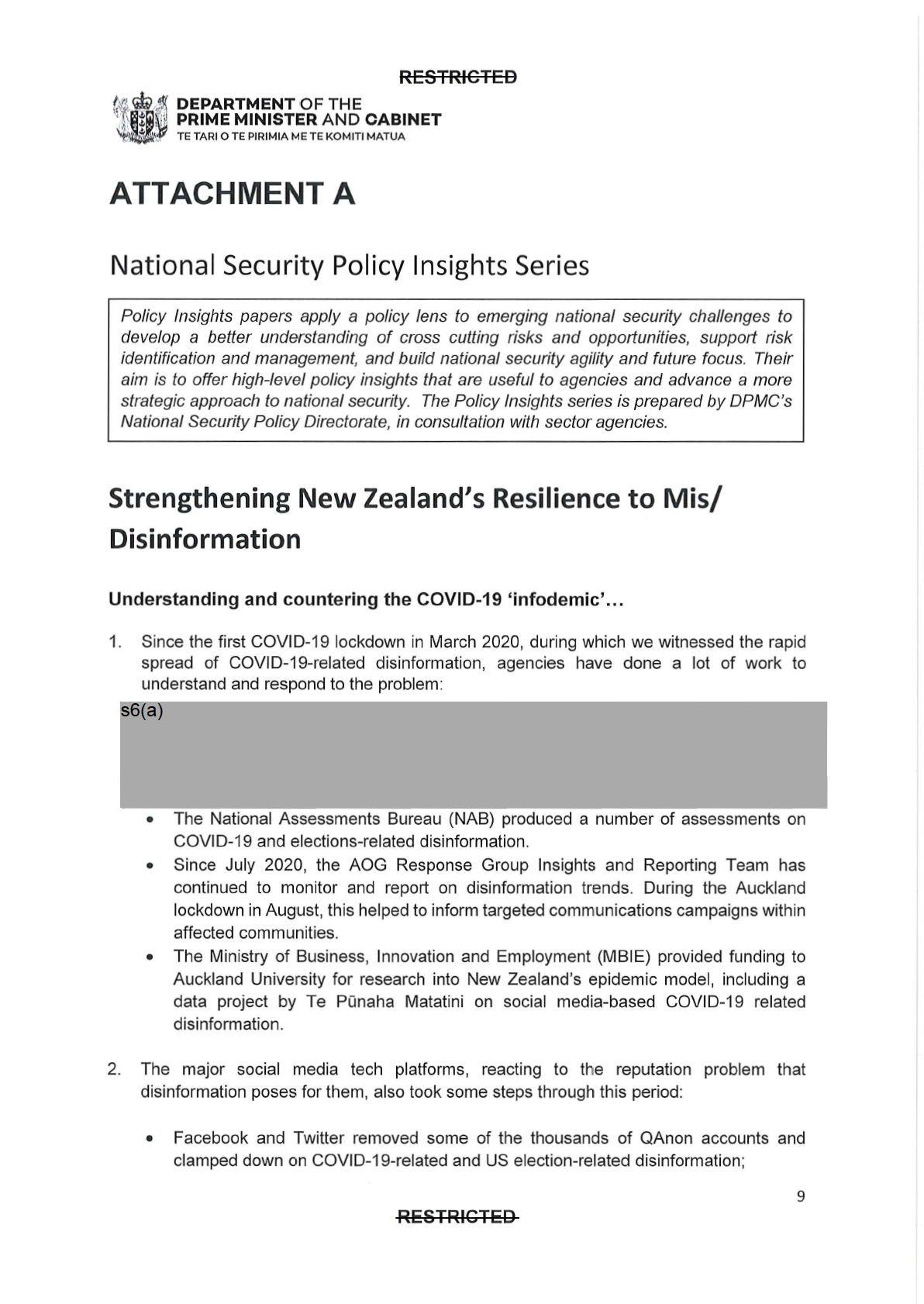

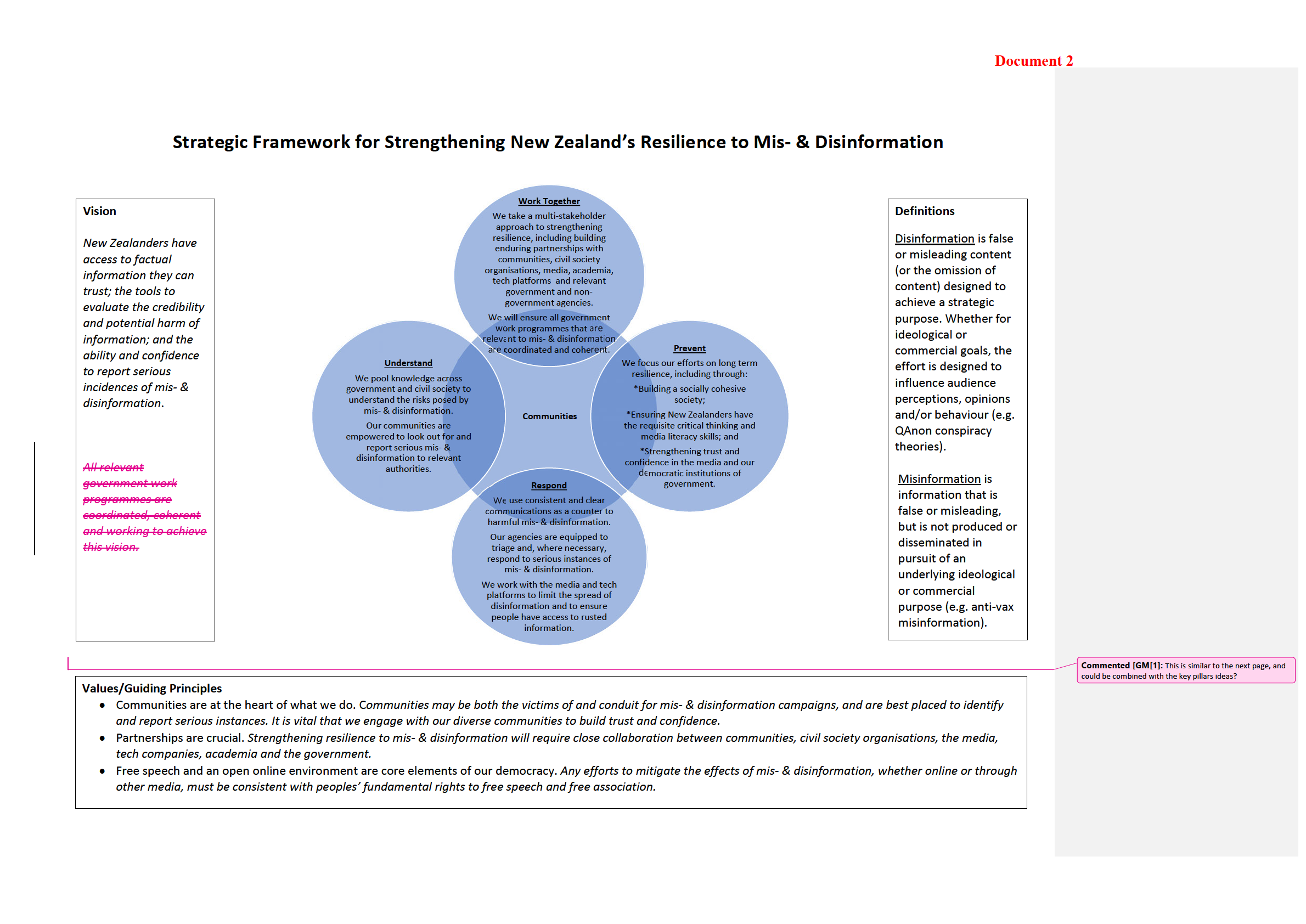

Develop a strategic framework for Cabinet consideration

18. We propose that the Interagency Coordination Group develop a strategic framework for strengthening New Zealand’s resilience to mis/disinformation. Agencies would look to take this to Cabinet for endorsement in early 2021 (noting that the pre-Christmas period is likely to be filled with RCOI-related issues).

19. Some thinking has already been done by agencies and, subject to Cabinet approval, a strategic framework might build on the proposals outlined in the following section. Key elements of this framework will need to address the public and statutory mandates for monitoring and addressing the disinformation problem, as well as the potential financial implications for agencies.

20. It will be essential that this process includes close consultation with academia, civil society organisations, the Media Council and other key public stakeholders, to ensure it reflects the views and experience of experts, is transparent, and achieves public buy-in. It will also be vital to ensure that this work is appropriately connected to other relevant national security and social inclusion workstreams. As noted in the Report of the Royal Commission of Inquiry into the Terrorist Attack on the Christchurch Masjidain, it is important to recognise that everyone in society has a role in making New Zealand safe and inclusive.

Possible elements of a strategic framework on disinformation

Encourage the creation of a multi-stakeholder forum

1. Agencies propose engaging with non-government partners and encouraging them to convene and lead a wider stakeholder group to consider and address the challenges posed by non-state mis/disinformation.

2. Given the considerable complexity of the mis/disinformation problem and its all-of-society impacts, a collaborative multi-stakeholder forum – bringing together all relevant civil society and academic experts, independent organisations, and social media and tech platforms, as well as relevant government agencies – would be the preferred approach. A list of potential participants is included at Appendix Three.5

3. A range of research, awareness and counter-disinformation activities is already being undertaken by media organisations, tech platforms and civil society groups and individuals. There is therefore a wealth of expertise within New Zealand that could usefully be leveraged to build the country’s resilience to mis/disinformation.

4. In many, if not most, cases, these organisations will be better placed than government agencies to publicly counter the effects of disinformation in New Zealand. This has been demonstrated recently in efforts by scientists, academics and the media to provide a steady stream of factual information to counter fake news.

5. It will be important, therefore, that any such multi-stakeholder forum be non-regulatory in nature and that it builds and maintains public trust. By virtue of its diverse membership, such a forum will also be well placed to create a forward calendar of key events and issues that might be subject to mis/disinformation, and to engage in additional outreach to independent experts, community leaders, key influencers and media.

5 We are aware that a number of academics have already partnered with Netsafe, media and civil society organisations to collectively look at the disinformation problem. In early discussions, they have been supportive of the idea of widening this into something like a multi-stakeholder forum.

Develop guiding principles to inform how we counter mis/disinformation

6. It is clear from available research and international experience that efforts to mitigate the effect of disinformation must be based on principles of transparency, integrity, accountability and stakeholder participation. They must also uphold the principles of a free, secure and open internet, privacy, and New Zealand’s human rights commitments, including freedom of expression.

7. For this reason, and noting the examples of the European Commission and the OECD, the lead agencies, in consultation with the multi-stakeholder forum, will develop and refine a set of Guiding Principles for mitigating non-state mis/disinformation.

8. Recognising that mis/disinformation is not a problem that government can “fix”, these principles might build on the guiding principles and values underpinning New Zealand’s Cyber Security Strategy6 and InternetNZ’s Internet Policy7.

Establish a monitoring function

9. Our ability to understand and, if necessary, combat mis/disinformation narratives in the future depends on establishing the mandate and capability to monitor for harmful mis/disinformation online, and on establishing baselines of such content on social media now that we can track against later.

10. New Zealand government agencies do not generally undertake proactive monitoring of social media for mis/disinformation, though there have been recent examples: the JIG and the AOG Insights and Reporting Team conducted COVID-19-related monitoring during the level four lockdown; the NZSIS undertook low-level activity to identify disinformation relevant to the 2020 New Zealand General Election; and the NZ Police Open Source Team has increasingly focused on the criminal and national security end of the disinformation spectrum.

11. s6(a) in the UK this task is carried out by analysts within the Cabinet Office and the Home Office. The equivalent agencies in New Zealand lack the resources and mandate to do this. There are therefore two plausible ways to establish a mis/disinformation baseline and to monitor content going forward:

i. Establishing a new “fusion cell” to monitor for mis/disinformation. This might be established in DIA within or alongside the CVE Online programme, and comprise staff from a range of agencies with relevant capability (however, this would require a budget); and/or

ii. Procuring monitoring and reporting on mis/disinformation in the New Zealand social media environment from outside the government sector. There are some universities, think tanks and providers who likely have the capability to do this for us s9(2)(j)

iii. A possible mixture of both options.

6 https://dpmc.govt.nz/sites/default/files/2019-07/Cyber%20Security%20Strategy.pdf

“To deepen collaboration and take effective action … we will work in a way that:

• Builds and maintains trust;

• Is people-centric, respectful and inclusive;

• Balances risk with being agile and adaptive;

• Uses our collective strengths to deliver better results and outcomes;

• Is open and accountable.”

7 https://internetnz.nz/policy/ “Internet for all; Internet for good.”

12. For the same reasons that a multi-stakeholder forum is suggested, it may be preferable for social media monitoring to be undertaken primarily by non-governmental, non-commercial partners. Public perceptions of government agencies carrying out broad monitoring of social media platforms could reinforce conspiracy theories and narratives of state surveillance, censorship and enforcement.

13. Agencies seeking to monitor and assess mis/disinformation would also face a mandate issue, which would require careful policy work to address. The interagency group could consider the models employed by Australia and the UK, which have both stood up ‘research’ teams to monitor for disinformation, looking at how these operate and under what authorisation and oversight. This work would further benefit from multi-stakeholder input on the social licence required to proceed.

14. Non-government partners already have the capability to combine data analytics and discourse analysis to highlight key trends and emerging issues, broken down by theme, geographic area and demographic. They are also more likely to have or source the capability to look across media in different languages. It may be possible for these partners to produce regular summaries of key trends and data on pre-agreed risk areas for use by the multi-stakeholder forum and government agencies. This would also be consistent with a whole-of-society, modern deterrence approach to addressing this problem.

15. Drawing on the capabilities of non-government partners may require some new funding; however, there are recent COVID-19-related examples of non-government organisations accessing existing funding pools to monitor and analyse disinformation. It is recommended that the interagency group explore procurement and funding mechanisms to assess whether anything further would be required.

Create a policy framework, including for assessments and referrals

16. The mis/disinformation spectrum is a broad one. While most instances will be content that does not stray into illegality, may be somewhat socially acceptable and will often constitute political discourse, there will be instances when disinformation crosses into illegal or dangerous activity. Incitement to attack cell towers is a recent example.

17. That said, mis/disinformation is an online content area where significant harm can be caused by otherwise legal material. Compared with content and harm types such as Child Sexual Exploitation and Abuse (CSEA) material or Terrorist and Violent Extremist Content (TVEC), mis/disinformation features a much wider spectrum of ‘grey’ content, where the harm caused can be difficult to determine because tropes and memes can be used to deliver coded messages.

18. The interagency group should therefore develop a policy framework for identifying which areas should be monitored for disinformation and which issues, based on regular trends reporting, will require more in-depth analysis and assessment.

19. s9(2)(g)(i)

20. The interagency group will also develop a detailed framework for referrals, where an instance of mis/disinformation meets a specific threshold (statutory or contractual) that requires a more direct response. For example:

• A state-sponsored disinformation campaign, to be referred to the Strategic Coordinator for Foreign Interference and the intelligence agencies;

• Disinformation used as a tool for radicalisation, to be referred to DIA, the NZ Police and the intelligence agencies;

• Election-related disinformation, to be referred to the Electoral Commission;

• Disinformation that inspires or supports criminal activity, to be referred to the NZ Police (high tech crimes unit, OS monitoring unit, etc.).

Other referrals may also be required: to the tech platforms themselves, the Race Relations or Human Rights Commissioners, the Ministry of Social Development, etc. Clear guidelines should be established to set out who refers, to whom, for what purpose and under what circumstances.

21. More broadly, the interagency group will consider a range of other policy implications relating to the mitigation of mis/disinformation. For example:

• whether interventions to mitigate mis/disinformation could be investigated as part of the proposed DIA/MCH review of media content regulation;

• a Code of Good Practice that could be agreed with media organisations (as has been established by the European Commission with European media organisations);

• work to support the use or development of technology to counter deep fakes; and

• whether a separate disinformation risk profile is required, or whether mis/disinformation should be considered as a dimension of other nationally significant risks.

Develop holistic strategies for building resilience to mis/disinformation

22. Two of the key tools for building New Zealand’s resilience to disinformation will be the effective coordination of clear and proactive public communications, and a focus on longer-term education and social inclusion that leverages work already underway in schools around active citizenship and online safety.

23. One of the key lessons learned during the COVID-19 pandemic has been the importance of timely, clear and coordinated public information, delivered through identifiable and trusted channels. During the Auckland lockdown, this became even more important, with the need for nuanced, community-specific messaging from trusted advisors.

24. This concept is equally applicable to other risk areas prone to mis/disinformation. However, to ensure a coordinated approach to public communications, lead agencies will need to work with the multi-stakeholder forum to develop a communications framework. This would not need to be overly prescriptive, but could ensure that agencies do not respond to disinformation in an ad hoc fashion. For example, the UK has produced a “Tool Kit” for agencies to address and respond to disinformation; a similar resource might help agencies here to calibrate their communications.

25. Key elements of this framework could include:

• That, where possible, public communications should be proactive, not reactive. The aim should be to build trust ahead of time (e.g. a proactive information campaign on COVID-19 vaccines) rather than to respond to or shut down disinformation or its proponents. Reacting to disinformation can serve to validate rather than counter it.

• That, where possible, public communications should be collaborative – i.e. developed and delivered in partnership with non-government entities, independent experts and community leaders. Government-driven narratives are not always the most effective communication tools, and they can reinforce conspiracy theories about state control.

26. Another vital part of equipping the population to recognise and manage disinformation is the use of disinformation awareness campaigns (e.g. Netsafe’s “Your News Bulletin”) and broader education strategies to develop public understanding of disinformation. Improving science literacy and numeracy has been shown to reduce susceptibility to mis/disinformation, and strategies successfully implemented in Finland and Sweden include introducing critical thinking elements into all aspects of the school curricula. The purpose of this is to build, from an early age, people’s ability to distinguish between authentic and false narratives, and to give them the tools to question and fact-check.8

27. New Zealand could implement a similar strategy to meet the long-term goal of building resilience to mis/disinformation. We already have ‘active citizenship’ and online safety initiatives in schools, and extending these to include social media literacy and critical thinking tools may not require much from a curriculum perspective. Additional funding may, however, be required. It will be important for the Ministry of Education and organisations such as Netsafe (both members of the Online Harms Prevention Group) and SeniorNet to be part of the multi-stakeholder forum.

8 An added benefit of these programmes, which fit within the scope of what is sometimes termed ‘modern deterrence’, is that they can also build resilience to radicalisation and can provide a boost to other social cohesion programmes.

APPENDIX ONE: The mis/disinformation problem

Mis/disinformation9 gives rise to and impacts several national security risks…

1. While dis- and misinformation are not new phenomena – and are not neatly confined to the online environment – the internet has decentralised the production and dissemination of information, amplifying the volume, speed and reach of mis/disinformation.

2. Social media presents a particularly effective and low-cost enabling platform, from which billions of users source their news. Echo-chamber dynamics, ‘social proof’ and the pursuit of viral content are manipulated by state and non-state actors to place mis/disinformation into online spaces and boost its prominence. This can be further amplified by users with pre-existing biases, and can raise doubts among those not already predisposed to conspiracies. Users with lower levels of formal education or literacy in a specific topic, as well as communities with a historic distrust of government, are especially susceptible.

3. The significance of mis/disinformation for national security became particularly apparent during the 2016 US Presidential election, when disinformation about candidates and policies was spread by malicious actors to interfere with the electoral process. It has been further highlighted by an explosion of disinformation during the COVID-19 pandemic (“the infodemic”). State actors have used disinformation campaigns to divert blame and showcase the ‘failings’ of other systems, and are exploiting the situation to achieve longer-term strategic goals. Non-state actors, meanwhile, have used mis/disinformation to undermine public health narratives, including by spreading claims about the cause and origin of the pandemic (e.g. that it is a bioweapon, or that it is linked to 5G), questioning the political motives behind lockdowns and mask use, and promoting conspiracy theories about future vaccines.

4. s6(a), s9(2)(g)(i) And as mis/disinformation continues to grow and technology evolves (e.g. deep fakes), people may find it increasingly difficult to discern fact from falsehood. This confusion over what is true could not only lead individuals to make misinformed choices in their own lives, but could also have significant consequences for national security:

• the politicisation of scientific fact (e.g. on a range of issues including pandemics and climate change) contributes to anti-intellectualism and can undermine effective policy responses, including public health responses to COVID-19;

• mis/disinformation about specific groups of people can create and amplify social divisions, challenge national values, foster extremist views and lead to radicalisation, break down social cohesion and, in some cases, incite violence towards minority groups;

• mis/disinformation and conspiracy theories can permanently damage the reputation of elected officials and/or their policies, undermining the integrity of elections and democracy more broadly;

• mis/disinformation and conspiracy theories can have a corrosive effect, undermining trust in public institutions and the social contract, with attendant consequences for policy making and service delivery;

• mis/disinformation can have an impact on critical infrastructure: conspiracy theories during the COVID-19 pandemic led people in a number of countries, including New Zealand, to attack 5G and other cell towers. A recent British report noted the possibility of disinformation being used to overload the power grid through the false promotion of cheap power during peak hours;

• mis/disinformation can also have economic repercussions, including by influencing the stock market and investment decisions. For example, a 2013 tweet from the hacked account of the Associated Press, claiming that then-President Obama had been injured in an explosion, resulted in a brief $130 billion devaluation of the US stock market; and

• mis/disinformation can also undermine confidence in the online environment, directly threatening our ability to achieve the vision in the Cyber Security Strategy that New Zealand is confident and secure in the digital world, enabling New Zealand to Thrive Online. In addition to the attendant cybersecurity issues this gives rise to, it can also have significant economic and service delivery impacts, affecting uptake of digital technology.

9 Disinformation is false or misleading content (or the omission of content) designed to achieve a strategic purpose. Whether the actor producing and disseminating the disinformation is pursuing ideological or commercial goals, the effort is designed to influence audience perceptions, opinions and/or behaviour (e.g. QAnon conspiracy theories).

Misinformation is information that is false or misleading but, unlike disinformation, is not produced or disseminated in pursuit of an underlying ideological or commercial purpose (e.g. anti-fluoride information).

Malinformation is information that may be based in reality, but is spread with the intent of causing harm.

Official

5. Amongst our closest security partners, oversight of the disinformation issue is coordinated by several different agencies, and responses vary from state-controlled counter-narratives through to funding for civil-society initiatives. A summary of their responses is attached as Appendix Two.

…and infodemics are spreading in New Zealand


6. New Zealand’s relatively high trust in the mainstream media and government institutions has largely inoculated the general population against believing disinformation. However, this did not provide complete protection against the increase in COVID-19 and election-related disinformation that circulated online following the August lockdown in Auckland.

7. The internationalisation of disinformation emanating from the US and/or amplified on US-based platforms is one factor in this. Anti-mask and anti-lockdown narratives, often couched in broad human rights and basic freedoms terms (and often grounded in narratives linked to the US Constitution), found fertile ground amongst followers of a few influencers, political parties and some church congregations.


8. Some of these theories alleged that the government was intentionally withholding information from the public, that the outbreak in Auckland was worse than reported, that the government was “utilising” the outbreak to impose martial law or otherwise erode human rights, and that the outbreak had been intentionally planned to manipulate the election.

9. The significance of this was noted by the AOG Insights & Reporting Team: s9(2)(g)(i). Combined with an increase in the number of anti-vax views being expressed and shared on social media platforms, this highlights that there remains a significant risk to the rollout of a COVID-19 vaccine.

10. More broadly, during this period there was also a proliferation of New Zealand-based Facebook groups promoting far-right QAnon theories alleging that the world is run by a cabal of Satan-worshipping paedophiles who are plotting against President Trump while operating a global child sex-trafficking ring. The US “documentary” ‘Plandemic’, which claims a secret society of billionaires is plotting to gain global domination by controlling people through a COVID-19 vaccine, has also been widely shared in New Zealand.

11. Many people sharing these conspiracies may have good intentions, but they also have a fundamental distrust of government, “experts” and the media.10 This is also evident within New Zealand’s Māori and Pasifika communities, where an intergenerational distrust of government and media, plus lived experience of systemic neglect and racism, are all factors that have enabled false information to gain traction. One example of this was the rumour that those who tested positive for COVID-19 would have their children removed from them by Oranga Tamariki.

12. There is potentially a Treaty of Waitangi element to this: racist disinformation narratives, and disinformation about the Treaty itself, are of concern. Additionally, through engagement with Māori on other digital issues (e.g. Budapest Convention accession, cloud computing and data governance), several partners have identified susceptibility to disinformation and conspiracy theories among Māori communities as an area of particular concern.

13. Gendered disinformation has received less public attention, but also poses a significant threat internationally and in New Zealand. A recent UK/US report has found that online spaces are being systematically weaponised against women leaders, with politically motivated gendered stereotypes and personal attacks posing a serious threat to women’s equal political participation.

10 A number of studies also suggest that poor scientific literacy and numeracy are linked to greater susceptibility to conspiracy theories and fake news.


APPENDIX TWO: The international dimension

1. Amongst our closest security partners, work to counter disinformation is coordinated by a number of different agencies, and responses vary from state-controlled counter-narratives through to funding civil-society initiatives. This work is evolving very quickly, so what follows is only a very brief snapshot of the various parts of Five Eyes governments that are addressing the issue of mis/disinformation. We will be engaging more closely on this issue in 2021 to learn more about partner approaches.

2. In Australia, while efforts are underway to understand the domestic social and behavioural impacts of disinformation, the focus has predominantly been on state-sponsored disinformation:

• A counter-disinformation taskforce hosted by DFAT was set up in June 2020. It is focused on tracking and responding to mis/disinformation and malign messaging in the Pacific and South East Asia.

s6(a)

3. In Canada, there has been a dual-track approach:

• Rapid Response Mechanism Canada (RRM), part of the G7 RRM, undertakes focused research to understand potential foreign threats against Canada and to identify tactics and trends. It is also a member of the Security and Intelligence Threats to Elections (SITE) task force.

• Canadian Heritage has the lead on non-state disinformation and takes a multi-stakeholder approach, working with civil society to address the problem through education and awareness campaigns.

• Public Safety Canada also hosts the Canada Centre for Community Engagement and Prevention of Violence (Canada Centre), which promotes coordination, planning, funding and research, and supports interventions.

4. In the UK:

• The Department for Digital, Culture, Media and Sport coordinates the British response to disinformation through the interagency Counter Disinformation Cell.

• A Rapid Reaction Unit within the Cabinet Office was set up to respond specifically to COVID-19-related disinformation issues, including by working with tech companies to block harmful mis/disinformation.

• The Home Office, through its PREVENT and RICU teams, has a monitoring function working on online TVEC and radicalisation, which has also focused closely on disinformation over the past year or so.

• The Government Communication Service created “RESIST”, a counter-disinformation toolkit designed for both the government and private sector to help prevent the spread of disinformation.


5. In the US:

• A range of agencies are involved in the issue of disinformation, including the intelligence community, the Department of Homeland Security, the Department of Justice and the State Department.

• Constitutional conventions and jurisprudence around freedom of expression complicate efforts to address disinformation in the US, as do the current state of political discourse and deeply entrenched political polarisation.

We are likely to be invited to join an increasing number of international actions


6. New Zealand has received numerous requests to share reporting, analysis and approaches on countering mis/disinformation, from a range of likeminded partners in both bilateral and multilateral contexts (in Five Eyes (FVEY) fora, NATO and the Canadian-hosted G7 RRM).

7. We joined public statements made by the Freedom Online Coalition11 in May and November. The statement in May read (inter alia):

“[T]he FOC is concerned by the spread of disinformation online and activity that seeks to leverage the COVID-19 pandemic with malign intent. This includes the manipulation of information and spread of disinformation to undermine the international rules-based order and erode support for the democracy and human rights that underpin it. Access to factual and accurate information, including through a free and independent media online and offline, helps people take the necessary precautions to prevent spreading the COVID-19 virus, save lives, and protect vulnerable population groups.”

More broadly, FOC members have signalled a need to keep working on disinformation issues.

8. At the 3 September meeting of the Aqaba Process, partners recognised the need for collective work on disinformation issues, acknowledging the corrosive effects of disinformation on public safety.

9. We expect that likeminded partners will increasingly offer to work together on further actions, statements, or attributions relating to disinformation. Developing a stronger domestic approach to mis/disinformation would effectively and credibly support our collective understanding and mitigation of the risk.

10. This international work may involve engaging closely with those partners most constructively engaged in this area. Given the sensitivities involved in working on disinformation, the principles applied domestically may also stand us in good stead for international engagement. The pool of partners able to work well on this issue is likely to be relatively small at present, composed of a subset of liberal democracies that belong to the FOC and where disinformation has not already substantially undermined the ability of institutions to engage effectively.

11 A partnership of 32 governments, of which New Zealand is one, working with civil society and the private

sector to support Internet freedom.


s6(a)

11.

12. As with domestic efforts, multi-stakeholder engagement will be critical in working internationally on this issue. We are potentially well placed to engage on this, building on relationships established through the Christchurch Call. Major technology firms have indicated some interest in further work with New Zealand on disinformation issues. So too have civil society organisations prominent in this area (Global Network Initiative, Global Disinformation Index, Witness, the Web Foundation), many of which participate actively in the Advisory Network to the Christchurch Call. Such engagement provides an important opportunity to understand and engage in international work on combatting disinformation, in ways that are consistent with New Zealand’s approach to internet governance and international human rights law.

13. A key issue in this international discussion is the absence of a widely accepted forum for working through disinformation issues. s6(a) It nonetheless indicates sufficient interest in, and urgency around, constructive multi-stakeholder work on disinformation that it might be possible, with careful work, to build a stronger platform for collective action on disinformation.


s9(2)(f)(iv)

Te Puni Kōkiri

Te Arawhiti

Ministry for Pacific Peoples

Ministry of Social Development

Treasury

CERT NZ
